Why We're Small, and Why That's Your Advantage

At SafeLLM, 'we care about every customer' isn't a marketing slogan. It's our business model. Here's what boutique enterprise support actually means.

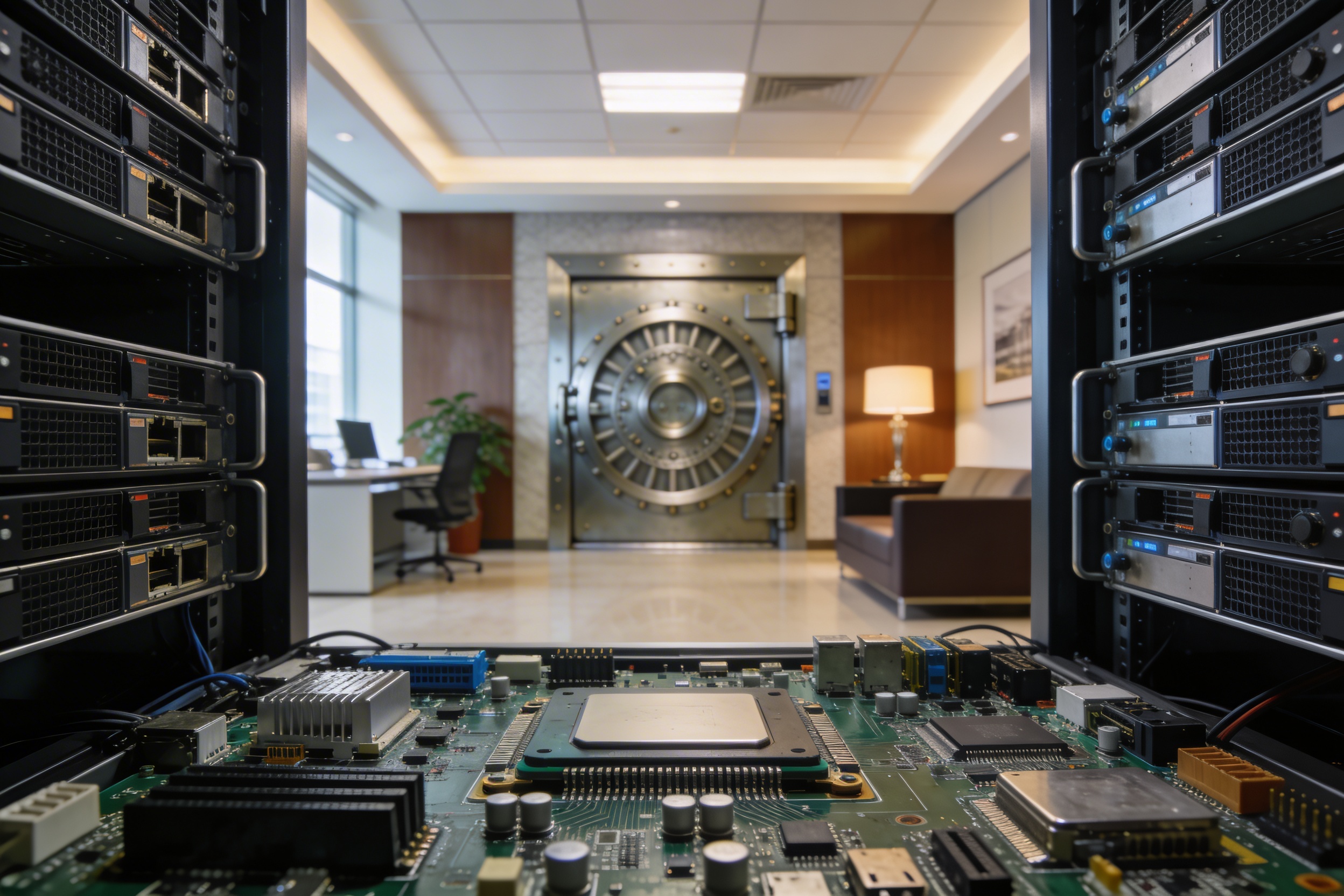

There was no AI security plugin for Apache APISIX. Now there is. Introducing SafeLLM — 100% air-gapped, CPU-ready, GDPR-compliant protection for your LLMs.

Why 100% offline deployment matters for banks, defense, and enterprises. And why you don't need GPU infrastructure for production-grade LLM protection.

How to prevent sensitive data from reaching cloud LLM providers. A practical guide to PII detection and anonymization in AI workflows.

An 80% cache hit rate does not mean 80% cost reduction. Here is an honest breakdown of what caching saves, what it costs to operate, and when you should not cache at all.