Enterprise · 2 min read

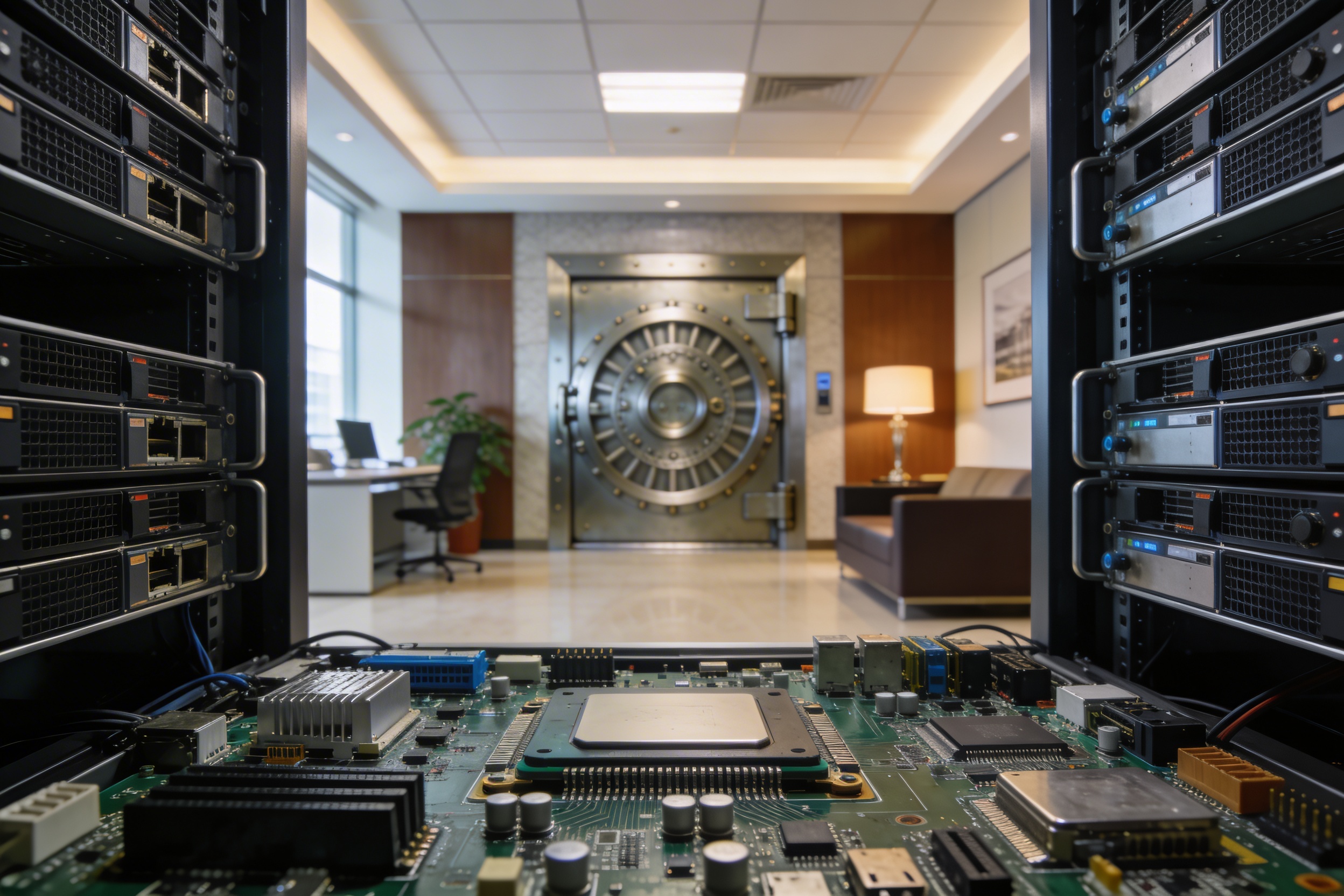

Air-Gapped & CPU-Only: Enterprise AI Security Without Compromises

Why 100% offline deployment matters for banks, defense, and enterprises. And why you don't need GPU infrastructure for production-grade LLM protection.

The Cloud Security Paradox

Here’s the irony: you’re trying to secure your AI workloads, but most security solutions require you to send data to someone else’s cloud.

- OpenAI Moderation API? Your prompts go to OpenAI.

- Cloud AI gateways? They see everything.

- Even some “enterprise” solutions phone home for telemetry.

For many organizations, this is a non-starter.

Who Needs True Air-Gap?

- Banks and financial institutions — regulators require data to stay on-premises

- Defense and government — classified networks have no internet access

- Healthcare — HIPAA compliance often means network isolation

- Tech giants (think Apple-level paranoia) — they build everything in-house for a reason

If your security posture requires that zero bytes leave your network, SafeLLM is built for you.

100% Offline: What It Actually Means

When we say SafeLLM runs air-gapped, we mean:

- All AI models are local — ONNX and GLiNER models ship with the container

- No license servers — no call-home for activation or telemetry

- No external dependencies — DNS, NTP, package repos — nothing required

- Audit logs stay local — write to your Loki/S3, not ours

You can literally run SafeLLM on a submarine with no satellite link. (We haven’t tested this, but architecturally it works.)
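If you run the container under Docker with `--network none` (as in the deployment example in this post), one way to confirm the isolation on your own host is to ask Docker which network mode the container actually got. The sketch below is not part of SafeLLM: `check_air_gapped` is a hypothetical helper name, and it relies only on the standard `docker inspect` command.

```shell
# Hypothetical helper (not shipped with SafeLLM): verify that a container
# was started without any network attached.
# Usage: check_air_gapped <container-name-or-id>
check_air_gapped() {
  # docker inspect reports the network mode the container was created with;
  # a container started with --network none reports "none".
  mode="$(docker inspect --format '{{.HostConfig.NetworkMode}}' "$1")" || return 2

  if [ "$mode" = "none" ]; then
    echo "OK: $1 has no network attached (NetworkMode=none)"
  else
    echo "WARNING: $1 is attached to a network (NetworkMode=$mode)" >&2
    return 1
  fi
}
```

Pass it the container ID that `docker run -d` prints. The function returns non-zero for anything other than `none`, so it can gate a deployment script.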

The GPU Question

“But AI requires GPU, right?”

Not for SafeLLM. Our ONNX models are optimized for CPU inference:

| Metric | CPU-Only Performance |
|---|---|
| Latency | <16ms (P95 < 20ms) |
| Throughput | 1,200+ RPS |
| Detection Accuracy | >95% |
| Hardware Required | Standard server CPU |

This means:

- No NVIDIA drivers — the #1 source of deployment issues

- No CUDA debugging — ever

- No special instances — use your existing fleet

- Simpler K8s manifests — no GPU resource requests

- Lower costs — CPU instances are cheaper everywhere

When You DO Want GPU

If you need state-of-the-art detection rates and have GPU infrastructure, SafeLLM Enterprise supports:

- Guard-class models (Llama, Gemma, Qwen architectures)

- Custom fine-tuned models for your specific threat landscape

- Hybrid deployment — GPU for L2, CPU for L0-L1.5

But this is optional. Most enterprises are perfectly served by CPU-only deployment.

Deployment Example

```shell
# Pull the container (one-time, can be done via USB for air-gap)
docker pull safellm/safellm-enterprise:latest

# Run completely offline; --network none means literally no network access
docker run -d \
  --network none \
  -v /path/to/models:/models \
  -v /path/to/config:/config \
  safellm/safellm-enterprise:latest
```

For Kubernetes, we provide Helm charts that work in fully isolated clusters.
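The "via USB" step mentioned in the deployment example can be sketched with Docker's standard `docker save` and `docker load` commands. This is a minimal sketch under stated assumptions: the archive file name and the two function names are hypothetical, and `sha256sum` (GNU coreutils) is assumed to be available on both machines to catch a corrupted or tampered copy.

```shell
IMAGE="safellm/safellm-enterprise:latest"
ARCHIVE="safellm-enterprise.tar"   # hypothetical file name

# On an internet-connected machine: pull the image, write it to a tar
# archive, and record a checksum next to it. Copy both files to the USB drive.
export_image() {
  docker pull "$IMAGE" &&
    docker save -o "$ARCHIVE" "$IMAGE" &&
    sha256sum "$ARCHIVE" > "$ARCHIVE.sha256"
}

# On the air-gapped host (run in the directory holding both files):
# verify the checksum first, and load the image only if it matches.
import_image() {
  sha256sum -c "$ARCHIVE.sha256" &&
    docker load -i "$ARCHIVE"
}
```

Once `import_image` succeeds, the image is in the local store, so the `docker run` command above can start it without any `docker pull` on the isolated host.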

The Bottom Line

- Air-gapped: Your data never leaves your network. Period.

- CPU-only: Production-grade performance without GPU complexity.

- Turn-key: Deploy in minutes, not weeks.

This is what enterprises actually need: not marketing buzzwords about “enterprise-ready,” but deployment patterns that work in regulated environments.

Ready to secure your air-gapped AI infrastructure? Book a technical deep-dive →